Data Science reflects Big Data and Intelligence

Image by Daniele Gay (DeviantArt)

I can visualize a time in the future when we will be to robots as dogs are to humans.

Claude Shannon

We live in the loudest and most networked epoch of our history, so it is no surprise that the foundations of intelligence and the ways we formulate knowledge have changed a lot and continue to change. When our environment changes, so do we. When the Industrial Revolution changed our understanding of and relationship to work, we traded our autonomy for a steady income.

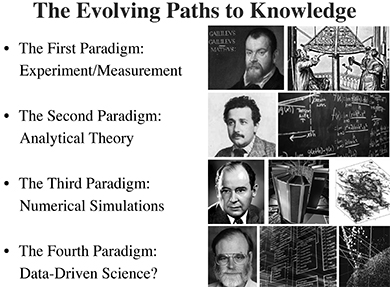

With ubiquitous data creation, we entered a new scientific age, which Jim Gray (2009) called the fourth paradigm of science. The term describes how science is changing rapidly as a result of collecting and analyzing vast amounts of data, also known as Big Data.

As cliché as it sounds, big data both redefines our ecosystem and is a product of it. Just as Fordism ushered in a new epoch in our society, big data is going to reshape how we perceive reality, how knowledge is constituted, how memory functions, our creative habits, and how we experience language and culture.

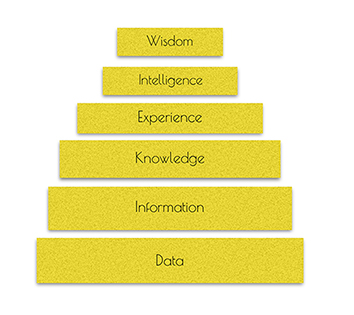

To feed this area with wisdom and imagination, it is important first to illustrate its relationship to knowledge and intelligence. Afterwards, we can explore the synergy between big data and data science.

Image Source: Caltech-JPL Summer School on Big Data Analytics

In this post, you’ll read about:

- What is data science?

- Why is big data debated in relation to the epistemics of knowledge production and to data science?

- How does cognitive computing relate to the ‘skill’ of intelligence?

- What is the dark side of Artificial Intelligence?

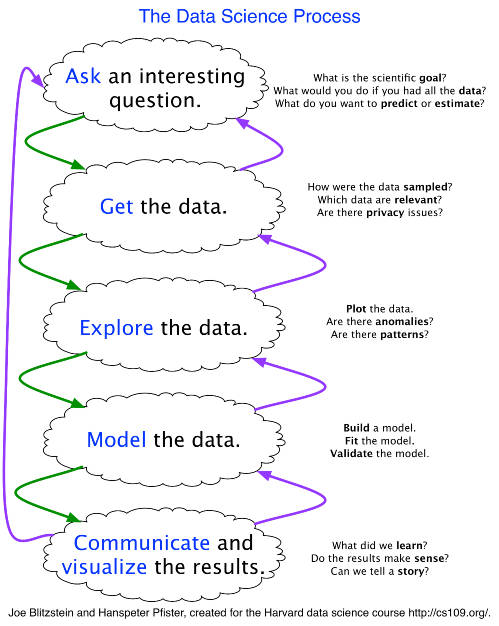

Image by Joe Blitzstein & Hanspeter Pfister

Data, whether geographical information, weather data, research data, transport data, energy consumption data, mobile data, sensor data, or health data, has become a fundamental component of our economy. Such data allow us to ask new questions and make new discoveries. Until recently, what we can learn from data was considered mostly a statistician’s question. However, the data management and analysis methods of conventional statistics cannot address the challenges of Big Data: the data are too large, datasets are messy and irregular, structures are heterogeneous, representations vary, values change rapidly, and dimensionality differs widely.

Thus, the mindset required to draw insight from data never involves only basic statistical techniques; it also requires Bayesian statistics, mathematical concepts such as linear algebra, and computer science skills. In addition, domain knowledge, whether in business, biology, or another field, is equally necessary. In a sentence, data science sits at the intersection of mathematics, statistics, computer science, and domain knowledge.

Data science is intertwined with two kinds of intelligence: artificial and human. Both derive from data processing. If intelligence is not merely an intrinsic trait, it can be developed by applying three components: a growth mindset1, commitment to practice2, and a clearly defined purpose3. In artificial intelligence, we could call the growth mindset contextual feature engineering or parameter optimization, the commitment to practice the training phase, and the desired purpose either the accuracy (evaluation) phase or the output, whether a product (engineered computing) or a prediction (information processing).
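To make the analogy a little more concrete, here is a minimal Python sketch. It is not taken from the references above or from any library; it assumes a toy one-dimensional dataset whose label is 1 when |x| > 1. The hypothetical hand-made feature `x * x - 1` stands in for feature engineering (the growth mindset), repeated gradient-descent passes over the data stand in for practice (training), and training-set accuracy stands in for the desired purpose. The names `raw`, `engineer`, `train`, and `accuracy` are illustrative only.

```python
import math

def raw(x):
    """Raw input only: a single number."""
    return [x]

def engineer(x):
    """Hypothetical engineered features ("growth mindset"): keep x, add x**2 - 1."""
    return [x, x * x - 1.0]

def train(feats, ys, lr=0.5, epochs=500):
    """'Commitment to practice': batch gradient descent on the logistic log-loss."""
    w = [0.0] * len(feats[0])
    b = 0.0
    n = len(feats)
    for _ in range(epochs):
        grad_w = [0.0] * len(w)
        grad_b = 0.0
        for f, y in zip(feats, ys):
            z = sum(wi * fi for wi, fi in zip(w, f)) + b
            err = 1.0 / (1.0 + math.exp(-z)) - y  # predicted probability minus label
            for i, fi in enumerate(f):
                grad_w[i] += err * fi / n
            grad_b += err / n
        w = [wi - lr * gi for wi, gi in zip(w, grad_w)]
        b -= lr * grad_b
    return w, b

def accuracy(w, b, feats, ys):
    """'Desired purpose': share of examples the trained model classifies correctly."""
    hits = 0
    for f, y in zip(feats, ys):
        predicted = 1 if sum(wi * fi for wi, fi in zip(w, f)) + b >= 0 else 0
        hits += int(predicted == y)
    return hits / len(ys)

# Toy data (hypothetical): the label is 1 when |x| > 1.
xs = [-2.0, -1.5, -0.5, 0.0, 0.5, 1.5, 2.0]
ys = [1, 1, 0, 0, 0, 1, 1]

for name, make in [("raw", raw), ("engineered", engineer)]:
    feats = [make(x) for x in xs]
    w, b = train(feats, ys)
    print(name, accuracy(w, b, feats, ys))
```

With only the raw feature, no single threshold on x can separate the two classes, so accuracy stays below 1.0; with the engineered feature, the same training loop separates them. Plain batch gradient descent is used only because it is short; a library optimizer would be the usual choice in practice.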

Two types of knowledge: “bastard” (subjective and insufficient knowledge, obtained by perception through the senses), and “legitimate” (genuine knowledge obtained by processing this unreliable “bastard” knowledge using inductive reasoning). - Democritus

AI foundations include mathematics, logic, philosophy, probability, linguistics, neuroscience, and decision theory. AI agents have the ability to comprehend, reason, and learn about how the world works, and hence to acquire capabilities beyond mere logical computation. These abilities can be developed by combining machine learning, reasoning, natural language processing, speech recognition, and computer vision, to name but a few. Recently, in language understanding and reasoning, agents have imitated the negotiation process: they learned to negotiate from a training set of negotiations between people and chose their utterances using reinforcement learning7.

Even if somebody can give you a reasonable-sounding explanation [for his or her actions], it probably is incomplete, and the same could very well be true for AI.

It might just be part of the nature of intelligence that only part of it is exposed to rational explanation. Some of it is just instinctual, or subconscious, or inscrutable.

Jeff Clune

Cognitive computing, on the other hand, refers to AI systems built around human-computer interaction, in which the agent mimics the functioning of the human brain so that it can ultimately interact with humans naturally. Understanding user intent, holding a conversation and, simply put, acting as a fellow human would are some examples of cognitive computing.

And the most sci-fi link in this intelligence chain is the brain-computer interface: a direct communication pathway between a wired brain and an external device4.

An article in MIT Technology Review5 points out that, just as many aspects of human behavior are impossible to explain in detail, it may not be possible for AI to explain everything it does. This is a dark side of AI, and of deep learning in particular: if a machine makes decisions in areas such as medicine, policing, banking, and military defense, and we cannot check how those decisions are made, then we cannot validate that each process works ‘properly’. What counts as ‘properly’ varies across communities; for instance, how a Tesla car would resolve a self-driving crash dilemma differs from how LeEco, a Chinese tech company, would resolve it. Moreover, since machines learn by discovering patterns in existing data produced by humans, they will mimic and reinforce existing biases. A recent study6 shows that gender and race biases are replicated by machines. The authors demonstrated this on human language data, using a statistical machine-learning model trained on a standard text corpus and testing it against a spectrum of known biases (as measured by the Implicit Association Test).

A lot of people are saying this is showing that AI is prejudiced. No. This is showing we’re prejudiced and that AI is learning it,

Joanna Bryson
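The idea of measuring bias in language data can be sketched in the spirit of the Word-Embedding Association Test (WEAT), an IAT-style test that compares cosine similarities between word vectors. This is a simplified illustration under stated assumptions, not the study’s own code: the two-dimensional vectors below are made-up toy values rather than real embeddings, the category labels in the comments are hypothetical, and using the sample standard deviation as the normaliser is an assumed choice.

```python
import math
from statistics import mean, stdev

def cosine(u, v):
    """Cosine similarity between two vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v)))

def association(w, A, B):
    """s(w, A, B): mean similarity of w to attribute set A minus mean similarity to B."""
    return mean(cosine(w, a) for a in A) - mean(cosine(w, b) for b in B)

def effect_size(X, Y, A, B):
    """Difference in mean association of target sets X and Y, scaled by the
    (sample) standard deviation of the associations over all target vectors."""
    sx = [association(x, A, B) for x in X]
    sy = [association(y, A, B) for y in Y]
    return (mean(sx) - mean(sy)) / stdev(sx + sy)

# Toy, made-up 2-D vectors (NOT real word embeddings).
A = [(1.0, 0.1), (0.9, 0.2)]   # hypothetical attribute words, e.g. "career"
B = [(0.1, 1.0), (0.2, 0.9)]   # hypothetical attribute words, e.g. "family"
X = [(0.8, 0.3), (0.9, 0.1)]   # hypothetical target words leaning towards A
Y = [(0.3, 0.8), (0.1, 0.9)]   # hypothetical target words leaning towards B

print(effect_size(X, Y, A, B))  # positive: X is associated with A more than Y is
```

Swapping X and Y flips the sign of the effect size. A real measurement would use embeddings trained on a large corpus, word lists drawn from the IAT literature, and a permutation test for significance, none of which is reproduced here.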

Technology has revealed previously nonexistent experiences in our perception of reality and has changed the lens through which we view the world. Many people see technology as a disruptor of social relations and a reducer of creativity, and big data as a troubling manifestation of Big Brother. Faulty algorithms, inaccurate hypotheses, biased data, and growing invasions of privacy can do serious harm to our reality.

A typical example of Big Data going wrong is Google Flu Trends (GFT), one of the earliest and best-known applications of big data in public health. GFT aimed to estimate the spread of flu from search queries, but it failed to catch the 2009 H1N1 pandemic because of algorithmic issues.

But validating the correctness of machine-generated output is still an open issue, and solving it would be considered a breakthrough.

In short, as Melvin Kranzberg’s first law puts it, technology is neither good nor bad; nor is it neutral.

In this sense, Big Data and AI are just tools that we should shape with care, rather than letting them shape us.

New materials are not necessarily superior but each material is only what we make it be,

Mies van der Rohe

Thank You for Reading & Keep Practising